

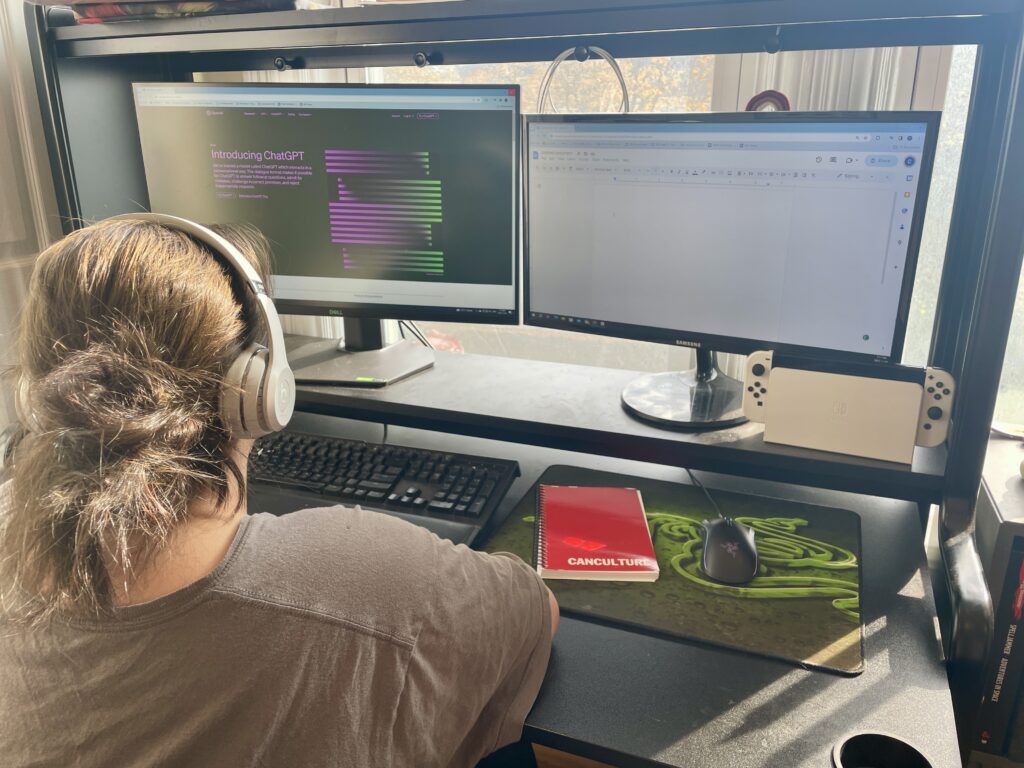

In recent weeks, two TMU student magazines — HerCampus and CanCulture Magazine — have received article submissions that the publications believe were written by artificial intelligence (AI).

As generative AI, a type of artificial intelligence that can create original content based on the data on which it was trained, becomes more commonplace, news publications are examining how the technology should be implemented.

At CanCulture Magazine, editors received several articles they believe were written by AI, including one listicle that featured multiple songs that do not exist.

HerCampus editor-in-chief Vanessa Tiberio said volunteers for the online publication were told at the start of the semester they were forbidden from using AI in their writing. Despite this, she believes an article submitted to her was written at least partially with the technology.

Tiberio, a third-year journalism student, has written for the publication since her first year and says that this is the first time she’s encountered any sort of AI usage at the magazine.

“I can’t remember it being an issue. When I was a junior editor [last year], ChatGPT was just coming out, so it wasn’t really a concern — it wasn’t as prevalent,” said Tiberio. “Coming into things [this year], I was a little more aware of it, especially as editor-in-chief because I’m the last person that sees an article before it reaches the world.”

Tiberio reached out to the writer regarding her suspicions and offered to discuss the matter further over the phone, but the writer declined to speak with her.

In July, the New York Times reported that Google is developing an AI that can produce news articles based on inputted information. In August, it was reported that the Associated Press is experimenting with generative AI, but “won’t use it to create publishable content and images.” And NewsGuard, an online organization that aims to combat digital media misinformation, has discovered at least 537 “unreliable AI-generated news websites” operating online.

TMU’s Academic Integrity Office has released guidelines surrounding the use of AI in classrooms, but they only extend to the university’s academics, meaning student publications are free to establish their own guidelines on how AI tools are used by their writers.

Apart from self-evident cases, such as articles that mention non-existent material, one of the primary difficulties of monitoring AI usage is that it cannot be verified with a high degree of certainty. While online AI detection tools exist, they can be unreliable and raise potential privacy concerns. This leaves editors with the difficult task of confronting suspected AI users directly.

“They did say that they didn’t use [AI], and it’s difficult to continue pushing back at that point because it’s definitely their word over mine,” said Tiberio. “Obviously, I did have my evidence that they used it, but at the end of the day, you can’t know for sure.”

Not all TMU student publications have had writers use AI to create content, however. Some, including On The Record (OTR), The Eyeopener, MetRadio and Intermission Sports, say they aren’t aware of any instances of their writers using artificial intelligence.

Negin Khodayari, editor-in-chief of The Eyeopener, says that the newspaper hasn’t had to deal with AI usage so far, because all their reported information is obtained by writers and editors, whereas AI-generated news typically gathers previously reported work from online databases.

“We’ve been lucky in that sense that we’ve never really needed to deal with it yet,” said Khodayari. “If it does come up in the future, I think the best way we could go about it would be sitting down with the writers and, first and foremost, just fact-checking everything.”

Khodayari explained that The Eyeopener doesn’t currently have a permanent policy on AI usage and that she would rather treat instances — should they arise — on a “case-by-case basis.”

Similarly, TMU’s campus radio station MetRadio said that they haven’t experienced AI usage by writers. In an email to On The Record, MetRadio station manager Elissa Matthews wrote:

“For our online content, we only accept written reviews of new films and albums, so it would be difficult to use AI for these as they do not have knowledge of things after 2021 (or so, depending on the model). In terms of on-air content for our news broadcasts […] people might be using AI to assist in script writing, although I kind of doubt it as the stiltedness of the AI would make it difficult to read.”

Mitchell Fox is the editor-in-chief of content at Intermission Sports, a student-led sports publication at TMU. Fox says that while he hasn’t encountered any writers using AI in their articles, he thinks that, in general, its use at the publication is a “no-go.”

Although Fox is against using artificial intelligence to write the articles themselves, he acknowledges that it can still serve journalists as a tool in other ways.

“I think the use of AI to help journalism will continue,” said Fox. “I mean, we all use Grammarly, right? That’s basically AI. I use Otter.ai for transcribing articles.”

OTR’s editorial code of conduct reflects a similar position, stating that:

“It is not the policy of OTR to use AI software to generate content, but we may use AI software in assisting in the creation of content (like using Otter.ai for transcription or Grammarly for spell-check and grammar checks).”

Despite their different experiences with AI usage and their different plans for responding to it, one common theme emerged among the student publications’ editors: regardless of its potential uses, AI cannot replace the inherent humanity present in journalism.

“The human element is what makes an article for me; I love it when I have writers that have their own voice,” said Fox. “I have some that have a more humorous way of writing, and I love to see that — and AI can’t really do that.”

—

For transparency: Caelan Monkman is a managing editor with CanCulture Magazine.

Reporter with On The Record, fall 2023